Project Deliverables: Definition, Examples, Templates

Project deliverables are the artifacts a project produces and a stakeholder formally accepts. The pattern sounds simple. The execution rarely is, because most projects conflate outputs (what got built) with deliverables (what got accepted), name them too vaguely to be testable, and skip the acceptance step that turns work into a closed deliverable.

This guide covers what deliverables in project management actually are, using PMI's canonical framing. It walks through examples by project type and audience, and through the four-way distinction between deliverables, milestones, outputs, and outcomes that top search results rarely cover cleanly. It also covers how to define deliverables that survive review, and the acceptance criteria pattern that keeps "done" from becoming opinion-driven.

Quick answer: what project deliverables are

A project deliverable is a unique and verifiable product, result, or capability formally accepted by a stakeholder against agreed acceptance criteria. Every deliverable is an output; not every output is a deliverable. The distinction is the formal acceptance: an output the team built that nobody has signed off on still carries scope-change risk and rework potential, regardless of how finished it looks.

Most project deliverables fail at definition, not at execution. The most common failures: vague names ("marketing report"), no single owner, no specified format, no acceptance criteria, and no scheduled review point. Each of these is a structural issue solvable upstream of the work.

The Checklist Builder above outputs a starter list by project type so the conversation about which deliverables matter has somewhere to start. The remaining sections cover the structural pieces in detail: definition, examples, the four-way distinction, how to define them, and acceptance criteria.

The PMI definition (and what it means in practice)

The Project Management Institute's PMBOK Guide gives the canonical definition. It is worth reading carefully because three words in it carry most of the load.

"A deliverable is any unique and verifiable product, result, or capability to perform a service that is required to be produced to complete a process, phase, or project." - PMI PMBOK Guide

The three load-bearing words are unique, verifiable, and required. Unique means a deliverable is a specific named artifact, not a category. Verifiable means there is an objective test for whether the deliverable was produced, not an opinion-driven judgment. Required means the deliverable is in scope, on the project charter, agreed by stakeholders before work began.

Most badly defined deliverables fail one of those three tests. "Strategy document" is not unique because it could be a 1-page memo or a 60-page deck. "Better customer experience" is not verifiable since there is no objective test. "A QA test plan" is not required if it was never in the charter and the team adds it during execution.

Reading the PMBOK definition with those three words highlighted is the cheapest discipline available for cleaning up vague deliverable lists.

Project deliverables examples by type

Most articles list 5 to 7 generic deliverables. The cleaner organizing structure is two axes: who the deliverable is for (internal vs external) and what form it takes (tangible vs intangible). Every deliverable falls in one quadrant of this matrix; the quadrant determines how to format it, who reviews it, and what acceptance looks like.

| Type | Internal (team-facing) | External (client / stakeholder-facing) |
|---|---|---|
| Tangible | Test plans, code repositories, backup configurations, internal dashboards, process maps | Production deployments, signed contracts, design files handed off, printed marketing collateral, finished software releases |
| Intangible | Working sessions, internal training, knowledge transfer, decisions documented in writing | Client presentations, kickoff meetings, customer onboarding sessions, advisory recommendations |

External tangible deliverables are what most people picture when they hear "project deliverable": signed contracts, production deployments, finished design files. External intangible deliverables are real deliverables despite leaving no physical artifact. Client presentations, customer onboarding sessions, and advisory recommendations all qualify. The discipline is producing a written summary stakeholders sign off on, even when the work itself was a meeting.

Internal deliverables are the ones most often skipped or undocumented. Test plans, knowledge transfer sessions, internal process maps, and decisions captured in writing are all deliverables in well-run projects. They support the work but rarely receive the same scrutiny as external deliverables. That asymmetry is why internal deliverables tend to get cut first when budgets tighten and are the last gap noticed when projects fail.

Deliverables vs Milestones vs Outputs vs Outcomes

The single biggest source of confusion in writing about project deliverables is conflating four related concepts that mean different things. Most top-ranking articles distinguish deliverables from milestones cleanly, then quietly drop outputs and outcomes from the conversation. The full four-way comparison is the version that prevents most stakeholder misunderstanding.

| Concept | What it is | Example | Owner |
|---|---|---|---|
| Output | What was produced. The work that came out of activity, regardless of whether it was accepted. | "We built a working signup flow" | The team executing |
| Deliverable | An output formally accepted by a stakeholder against agreed criteria. Every deliverable is an output; not every output is a deliverable. | "The signup flow was reviewed and signed off by the product lead" | The accepting stakeholder |
| Milestone | A time marker on the project plan. Carries no artifact by itself; usually anchored to a deliverable's acceptance date. | "Beta launch milestone hit on July 15" | The project manager (planning) |
| Outcome | The behavior change or business result the deliverable was meant to drive. Measured after delivery, not at delivery. | "Signup conversion increased from 12% to 18%" | The business sponsor |

The output-versus-deliverable distinction matters in week-to-week reporting. A status update saying "we shipped the new dashboard" describes an output. A status update saying "the new dashboard was reviewed and signed off by the head of customer success" describes a deliverable. Counting outputs as deliverables inflates perceived completion and lets unaccepted work pile up unnoticed until the project closeout review surfaces a half-dozen pending acceptances at once.
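The gap between outputs and deliverables in a status report can be made visible with a simple count. The following is a minimal sketch, not a prescribed reporting format: the `WorkItem` record, its field names, and the sample items are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class WorkItem:
    """One line of a status report. Field names are illustrative assumptions."""
    name: str
    built: bool                        # the team produced it (an output)
    accepted_by: Optional[str] = None  # who signed off; None means not yet accepted


def completion_summary(items: List[WorkItem]) -> Dict[str, int]:
    """Count outputs, accepted deliverables, and the acceptances still pending."""
    outputs = [item for item in items if item.built]
    deliverables = [item for item in outputs if item.accepted_by is not None]
    return {
        "outputs": len(outputs),
        "deliverables": len(deliverables),
        "pending_acceptance": len(outputs) - len(deliverables),
    }


# Hypothetical status report: three things built, only one signed off.
report = [
    WorkItem("Signup flow", built=True, accepted_by="product lead"),
    WorkItem("New dashboard", built=True),
    WorkItem("Onboarding emails", built=True),
    WorkItem("Billing page", built=False),
]
summary = completion_summary(report)
print(summary)
```

Here the report would read as "three of four done" if outputs were counted, while only one item is actually a deliverable. The `pending_acceptance` number is the pile that otherwise surfaces at closeout.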

The deliverable-versus-outcome distinction matters in how projects are evaluated. Shipping a customer feedback dashboard is a deliverable; the customer success team using it to cut average response time is the outcome. Many projects ship every deliverable on time and produce no measurable outcome because nobody owned the behavior change after the deliverable landed.

"Shipping is a feature. A really important feature. Your product must have it." - Joel Spolsky, in Joel on Software

Spolsky's point cuts both ways. The team that produces 12 outputs and gets none of them accepted has not shipped, even if it has been busy. The team that ships 8 deliverables and measures zero outcomes has shipped, but the project has not yet justified itself. Both halves are necessary.

How to define deliverables that actually land

The discipline of deliverable definition lives upstream of execution. A deliverable defined badly at project charter stage does not get fixed during the work; the work goes on, the definition stays vague, and the acceptance review at the end becomes a renegotiation. Five steps prevent this from happening.

- Name the deliverable concretely. "Marketing report" is not a deliverable; it is a category. "Q3 paid-acquisition performance report with channel-level CAC, ROAS, and recommendation memo for Q4" is a deliverable. The discipline at this step is forcing yourself to write the noun phrase a stakeholder could recognize on sight.

- Assign a single owner. Multiple owners equal no owner. The deliverable owner is the one person accountable for it landing, even if the work is shared. Use a RACI matrix if accountability is genuinely contested across teams; use a name and date if it is not.

- Specify the format and final form. A 30-page deck and a 1-page memo are both "the report," and they cost different amounts. Specifying format upfront prevents the late-stage scope expansion where the deliverable doubles in size without budget moving. Format includes length, channel, and tools (deck vs doc vs dashboard).

- Write the acceptance criteria. Every deliverable needs the test for done. Four tests worth running each candidate criterion against: specific (clear scope), measurable (objective check), testable (someone can verify), agreed (signed off by the accepting stakeholder before work starts). Without acceptance criteria, "done" becomes opinion-driven and the deliverable bleeds revisions.

- Set the cadence and review point. When will the deliverable be reviewed, by whom, with how much advance notice? Most deliverable failures happen in the gap between "almost done" and "actually accepted." Schedule the review explicitly when the deliverable is defined, not when the work is finishing.

The order matters. Naming concretely surfaces scope ambiguity that vague names hide. Single ownership prevents joint-accountability fragmentation. Format specification prevents late-stage scope expansion. Acceptance criteria prevent opinion-driven review. Cadence prevents the gap between "almost done" and "actually accepted" from absorbing the project's last week.
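The five steps above can be sketched as a small record that reports which parts of a definition are still missing. This is an illustrative sketch under stated assumptions: the `DeliverableDefinition` class, its field names, the owner name, the format, and the review date are hypothetical, and the word-count check is only a crude stand-in for judging whether a name is concrete.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class DeliverableDefinition:
    """Charter-stage record of one deliverable. Field names are illustrative."""
    name: str
    owner: Optional[str] = None        # exactly one accountable person
    final_form: Optional[str] = None   # length, channel, tool
    acceptance_criteria: List[str] = field(default_factory=list)
    reviewer: Optional[str] = None
    review_date: Optional[date] = None

    def gaps(self) -> List[str]:
        """Return the definition steps that are still incomplete, in step order."""
        found = []
        # Crude heuristic (an assumption): very short names are usually categories.
        if len(self.name.split()) < 4:
            found.append("name reads like a category")
        if not self.owner:
            found.append("no single owner")
        if not self.final_form:
            found.append("format not specified")
        if not self.acceptance_criteria:
            found.append("no acceptance criteria")
        if not (self.reviewer and self.review_date):
            found.append("review point not scheduled")
        return found


vague = DeliverableDefinition(name="Marketing report")
print(vague.gaps())

concrete = DeliverableDefinition(
    name="Q3 paid-acquisition performance report with channel-level CAC, ROAS, "
         "and recommendation memo for Q4",
    owner="Dana",                                     # hypothetical name
    final_form="written report plus one-page memo",   # hypothetical format
    acceptance_criteria=[
        "Covers all paid channels active during Q3 with channel-level CAC, ROAS, and spend share",
        "Ends with a one-page recommendation memo for Q4",
    ],
    reviewer="head of growth",
    review_date=date(2025, 10, 8),                    # hypothetical date
)
print(concrete.gaps())
```

The vague definition reports all five gaps; the concrete one reports none. A check like this cannot judge whether the criteria are any good, only whether someone wrote them down before work began.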

Acceptance criteria: the 4-test pattern

Acceptance criteria are the test for "done." Without them, the reviewer's judgment is the test, which produces the predictable conversation where the reviewer says "this is not what I expected" and the team says "this is what we agreed to build." Both are technically right because nobody wrote down the test.

Four tests run against any candidate criterion separate useful acceptance criteria from theater.

Specific. The criterion describes a clear scope, not a category. "The dashboard shows weekly active users" is specific. "The dashboard provides actionable insights" is not.

Measurable. An objective check exists. "Page loads in under 2 seconds at p95" is measurable. "The page feels fast" is not.

Testable. Someone can actually run the test before review. "All forms validate required fields client-side and server-side" is testable. "All forms work correctly" requires the reviewer to define what correctly means, which puts the test back in the reviewer's head.

Agreed. The accepting stakeholder signed off on the criterion before work started. Acceptance criteria written after work is finished are feedback, not a contract.

For a worked example, take a deliverable named "Q3 paid-acquisition performance report." Its acceptance criteria might be: (1) covers all paid channels active during Q3 with channel-level CAC, ROAS, and spend share; (2) includes a comparison versus Q2 with three insights from the diff; (3) ends with a one-page recommendation memo for Q4; (4) reviewed by the head of growth in the second week of October.

Specific, measurable, testable, agreed. The team and the reviewer both know what done means.
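One way to keep the four tests from staying in people's heads is to record the answers per criterion. The sketch below assumes the first three answers come from a human reviewer and derives only the "agreed" test from dates. The `Criterion` record, the `failed_tests` helper, and every date are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Criterion:
    """One candidate acceptance criterion with a reviewer's recorded judgments."""
    text: str
    specific: bool      # describes a clear scope, not a category
    measurable: bool    # an objective check exists
    testable: bool      # someone can run the test before review
    agreed_on: Optional[date] = None  # sign-off date; None means never agreed


def failed_tests(criterion: Criterion, work_start: date) -> List[str]:
    """Return the names of the four tests this criterion does not pass."""
    failed = []
    if not criterion.specific:
        failed.append("specific")
    if not criterion.measurable:
        failed.append("measurable")
    if not criterion.testable:
        failed.append("testable")
    # "Agreed" only counts if sign-off happened strictly before work started.
    if criterion.agreed_on is None or criterion.agreed_on >= work_start:
        failed.append("agreed")
    return failed


work_start = date(2025, 7, 1)  # hypothetical project start

good = Criterion("Page loads in under 2 seconds at p95",
                 specific=True, measurable=True, testable=True,
                 agreed_on=date(2025, 6, 20))
vague = Criterion("The dashboard provides actionable insights",
                  specific=False, measurable=False, testable=False,
                  agreed_on=date(2025, 6, 20))
late = Criterion("All forms validate required fields client-side and server-side",
                 specific=True, measurable=True, testable=True,
                 agreed_on=date(2025, 8, 15))

for criterion in (good, vague, late):
    print(criterion.text, "->", failed_tests(criterion, work_start))
```

The third case is the one that tends to slip through: a well-written criterion that was only agreed after work started fails the "agreed" test and counts as feedback, not as a contract.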

What we recommend

For most teams, the practical move is not "buy a deliverables tool" but "name deliverables concretely, write acceptance criteria upfront, and run the project somewhere the deliverables list and the work against it sit in the same place." A deliverable list that lives in a separate document from the actual work tends to drift; a deliverable list embedded in the project workspace stays current because the team touches it daily.

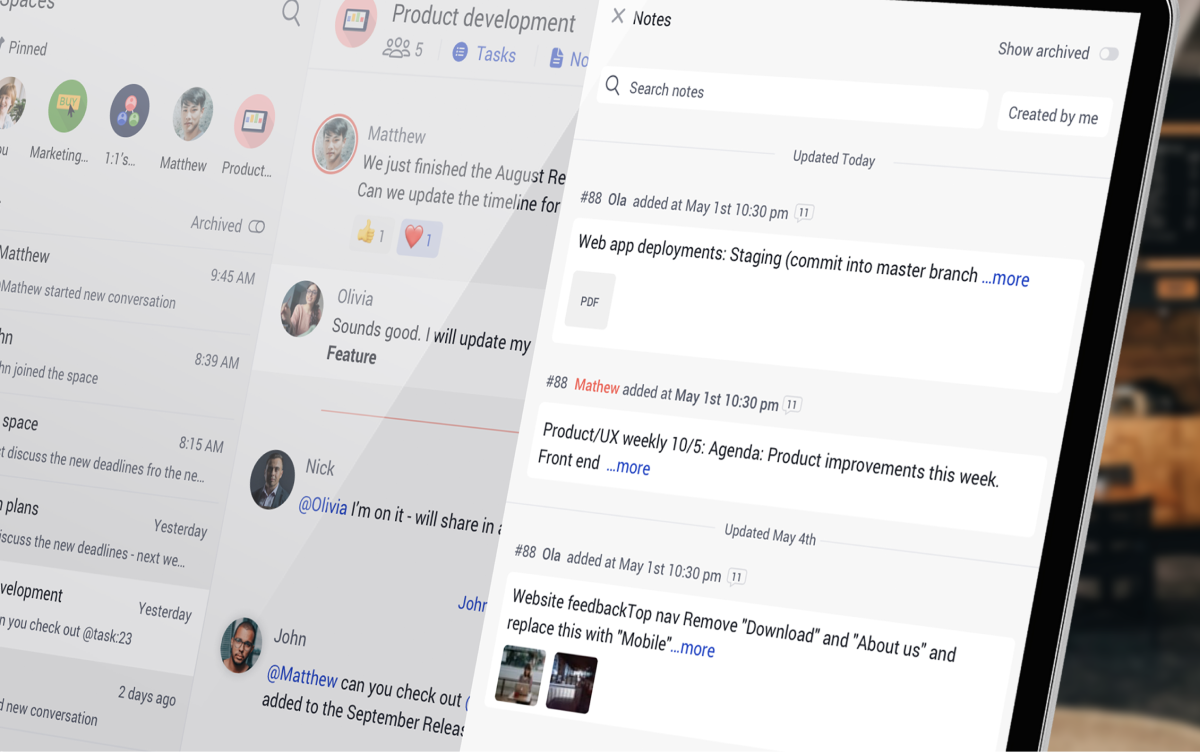

What we do at Rock: chat, tasks, and notes live in the same workspace, so the deliverables list, the acceptance criteria for each, and the conversations about what "done" means all sit next to the actual work. For small teams and agencies running multiple projects without a dedicated PMO, this consolidation matters more than dependency-tracking sophistication. Deliverables fail because they get lost between tools, not because the framework is wrong.

Pair the deliverables list with a project charter at kickoff (locks scope and authority), a project timeline for sequencing, a RACI matrix for shared accountability, and a scope of work template for client-facing engagements. Deliverables are one artifact in a small set; treating them as the whole plan misses the upstream discipline that makes them survive review.

"The bearing of a child takes nine months, no matter how many women are assigned." - Frederick Brooks, in The Mythical Man-Month

Brooks's point applies to deliverables that have sequential dependencies. Some work cannot be parallelized, and adding people to a late deliverable accelerates nothing. The honest version of the conversation is acknowledging which deliverables are sequential, which are parallel, and adjusting the timeline rather than the team.
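To see why sequential deliverables set the floor on the timeline, it can help to compute the earliest finish of each deliverable from its dependencies. The following is a rough sketch with invented numbers: the deliverable names, the durations in weeks, and the dependency map are all assumptions, and the function assumes the dependencies contain no cycles.

```python
from typing import Dict, List


def earliest_finish(durations: Dict[str, int],
                    depends_on: Dict[str, List[str]]) -> Dict[str, int]:
    """Earliest finish per deliverable: its own duration plus its slowest prerequisite."""
    finish: Dict[str, int] = {}

    def resolve(name: str) -> int:
        if name not in finish:
            # A deliverable can start only once every prerequisite has finished.
            start = max((resolve(dep) for dep in depends_on.get(name, [])), default=0)
            finish[name] = start + durations[name]
        return finish[name]

    for name in durations:
        resolve(name)
    return finish


# Hypothetical plan, durations in weeks.
durations = {"charter": 1, "design": 3, "build": 4, "copy": 2, "launch": 1}
depends_on = {
    "charter": [],
    "design": ["charter"],
    "build": ["design"],
    "copy": ["charter"],           # can run in parallel with design and build
    "launch": ["build", "copy"],
}

finish = earliest_finish(durations, depends_on)
print(finish["launch"])            # length of the longest dependency chain
print(sum(durations.values()))     # total effort if nothing ran in parallel
```

In this example the plan holds 11 weeks of work, but launch cannot finish before week 9 because charter, design, build, and launch are strictly sequential. Extra people could only help with "copy", which is not on that chain.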

Common pitfalls

The predictable failure modes when defining or running project deliverables.

- Conflating outputs with deliverables. An output is what was produced; a deliverable is an output formally accepted against agreed criteria. Counting every output as a deliverable inflates the project's perceived completion and lets unaccepted work pile up unnoticed until acceptance day. The fix is requiring acceptance criteria upfront for anything called a deliverable.

- Vague names like "marketing report" or "documentation." Generic deliverable names guarantee scope ambiguity. "Documentation" can mean a 2-page README or a 60-page enterprise compliance manual. The work to produce each is wildly different. Force concrete naming at the project charter stage; the awkwardness of writing the specific name surfaces the scope conversation that needed to happen anyway.

- No acceptance criteria. Without acceptance criteria, "done" becomes opinion-driven. The reviewer says "this is not what I expected"; the team says "this is what we agreed to build"; both are technically right because nobody wrote the test for done. Roughly half of the top search results on this topic skip acceptance criteria entirely, yet teams that write them upfront tend to run projects that actually finish.

- Multiple owners on the same deliverable. "Joint accountability" means no accountability. When the deliverable slips, ownership is contested and nobody is responsible for the recovery plan. Pick one owner per deliverable. Joint contribution is fine; joint ownership is the failure mode.

- Treating closeout as optional. Most projects ship the deliverable but skip the formal acceptance. Without sign-off, the work technically is not delivered, future projects cannot reuse the artifact, and the team learns nothing from the cycle. The 15-minute closeout review is the cheapest activity in the project lifecycle and the most-skipped.

Frequently asked questions

What are project deliverables?

Per the PMI PMBOK Guide, a project deliverable is "any unique and verifiable product, result, or capability to perform a service that is required to be produced to complete a process, phase, or project." In practical terms, a deliverable is an output that has been formally accepted by a stakeholder against agreed acceptance criteria. The accepted-against-criteria piece is what distinguishes a deliverable from a generic output.

What are examples of project deliverables?

Examples vary by project type. A software build delivers PRDs, architecture diagrams, working code, QA results, and handoff docs. A marketing campaign delivers a strategy document, creative assets, landing pages, tracking setup, and a final ROI report. A consulting engagement delivers a statement of work, diagnostic findings deck, recommendation memo, and knowledge transfer. The Checklist Builder above outputs typical starter deliverables for six common project types.

What is the difference between a deliverable and a milestone?

A deliverable is a tangible or intangible artifact produced and accepted. A milestone is a time marker on the project plan with no artifact of its own. Milestones are typically anchored to deliverables (the milestone date is when a deliverable was accepted), but they are different concepts. The 4-way comparison table above shows deliverable, milestone, output, and outcome side by side.

What is the difference between deliverables and outputs?

An output is what the team produced. A deliverable is an output that has been formally accepted by a stakeholder against agreed criteria. Every deliverable is an output; not every output is a deliverable. The distinction matters because counting outputs as deliverables inflates perceived completion: work that has been built but not accepted still has scope-change risk and rework potential.

What is the difference between deliverables and outcomes?

A deliverable is what shipped; an outcome is the behavior change or business result the deliverable was meant to drive. Shipping a customer feedback dashboard is a deliverable; the team using it to reduce response time is the outcome. Outcomes are measured weeks or months after delivery, not at delivery. Many projects ship deliverables successfully and never measure outcomes.

What are internal vs external deliverables?

External deliverables are produced for clients, customers, or external stakeholders. Internal deliverables support the team or organization producing the work but never leave it. Both are real deliverables and both deserve acceptance criteria; what changes is the audience for review and the format. The 2x2 table above splits deliverables by audience (internal/external) and form (tangible/intangible).

How do you write acceptance criteria for a deliverable?

Run each criterion against four tests: specific (the scope is clear), measurable (the check is objective, not opinion), testable (someone can run the test), and agreed (the accepting stakeholder signed off before work started). Acceptance criteria written after work is finished are just feedback; written upfront, they are the contract that lets the team know when to stop.

How to start this week

Pick the project. Run the Checklist Builder above with the project type to generate a starter deliverables list. Walk through it with the team and the sponsor in a 30-minute conversation; the questions that come up will surface scope ambiguities and accountability gaps you did not know existed.

For each surviving deliverable, write acceptance criteria that pass the four tests (specific, measurable, testable, agreed) and get the accepting stakeholder to sign off before any work begins. The 30 minutes you spend at definition are the cheapest insurance against the multi-day rework cycle that vague deliverables produce at acceptance review.
