Most sales dashboards track 12 to 15 metrics across pipeline, activity, conversion, and revenue. The honest answer is that a B2B sales team only needs about six numbers, and one composite metric (Sales Velocity) compresses the whole engine's health into a single signal. Tracking 15 metrics in isolation produces noise; tracking the right six tells the team where to focus next.

This guide covers the six sales KPIs that actually predict revenue, with benchmarks for each. It introduces the auto-calculated Sales Velocity formula that turns four of those numbers into one headline output. The Health Check widget further down lets you plug your numbers in and see your dollars-per-day result.

Quick Answer: What Are Sales KPIs?

Sales KPIs are the small set of metrics that connect sales activity to revenue outcomes. The six that matter for most B2B teams are quota attainment, pipeline coverage ratio, win rate, average sales cycle length, average deal size (ASP), and lead response time. Sales Velocity is the auto-calculated composite that combines four of those into a dollars-per-day output.

"Defining the sales methodology enables the sales training formula to be scalable and predictable." - Mark Roberge, The Sales Acceleration Formula

Roberge's data-driven approach at HubSpot helped take the company from $0 to $100 million in annual recurring revenue. The six KPIs below rest on the same idea: replace gut feel with metrics that connect day-to-day sales work to revenue, and track the few that actually drive outcomes rather than the long list that decorates dashboards.

The shape matters as much as the numbers. Sales metrics form a hierarchy: pipeline coverage feeds win rate, win rate combined with ASP feeds Sales Velocity, and Sales Velocity over time predicts whether quota attainment will land. Tracking a flat list of 15 numbers misses these relationships. Tracking the six below in their proper layers makes the gaps obvious.

The 6 Sales KPIs That Predict Revenue

Each of the six below has a specific job. Together they tell you whether the engine is healthy, where it is leaking, and how much revenue it produces per day. The composite (Sales Velocity) is the headline; the inputs are where the team focuses to move it.

Quota attainment. The percentage of quota the team or rep achieves in a period. Healthy is 80% or higher across the team; below 65% is a process problem, not an effort problem. This is the board-level scorecard; track it monthly.

Pipeline coverage ratio. Qualified pipeline value divided by quota. The healthy band is 3x to 4x; below 2x means the team is mathematically unlikely to hit number this quarter regardless of how good the closers are. Watch it weekly. This is the leading indicator that runs ahead of quota attainment.
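As a worked example, the ratio and the extra qualified pipeline needed to reach the 3x floor fall straight out of the definition. This is a minimal Python sketch; the function names and the quota and pipeline figures are hypothetical.

```python
def pipeline_coverage(qualified_pipeline: float, quota: float) -> float:
    """Coverage ratio: qualified pipeline value divided by quota."""
    if quota <= 0:
        raise ValueError("quota must be positive")
    return qualified_pipeline / quota


def pipeline_gap(qualified_pipeline: float, quota: float, target: float = 3.0) -> float:
    """Additional qualified pipeline needed to reach `target` coverage (0 if already met)."""
    return max(0.0, target * quota - qualified_pipeline)


# Hypothetical quarter: $1M quota, $2.4M of qualified pipeline.
quota = 1_000_000
pipeline = 2_400_000
print(f"coverage:  {pipeline_coverage(pipeline, quota):.1f}x")   # coverage:  2.4x
print(f"gap to 3x: ${pipeline_gap(pipeline, quota):,.0f}")      # gap to 3x: $600,000
```

At 2.4x this hypothetical team sits in the Watch band; the gap figure turns that into a concrete prospecting target for the rest of the quarter.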

Win rate by segment. Closed-won deals divided by total opportunities. B2B SaaS mid-market typically lands at 20% to 30%; SMB closes higher (often 30%+), enterprise lower (8% to 15%). Always segment; a blended company-wide win rate hides which motion is working and which is not.
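A quick way to see why segmenting matters is to compute the blended and per-segment rates from the same counts. A minimal Python sketch; the segment counts are hypothetical, and it assumes win rate is measured over closed opportunities only (won plus lost), leaving open deals out of the denominator.

```python
# Hypothetical closed-deal counts per segment as (won, lost). Open deals are
# excluded: this sketch assumes win rate = won / (won + lost).
closed = {
    "SMB": (35, 65),
    "Mid-market": (25, 75),
    "Enterprise": (8, 92),
}


def win_rate(won: int, lost: int) -> float:
    """Share of decided opportunities that closed-won (0.0 if none decided)."""
    decided = won + lost
    return won / decided if decided else 0.0


blended = win_rate(
    sum(won for won, _ in closed.values()),
    sum(lost for _, lost in closed.values()),
)
print(f"{'Blended':<11} {blended:.0%}")
for segment, (won, lost) in closed.items():
    print(f"{segment:<11} {win_rate(won, lost):.0%}")
```

The blended 23% describes none of the three motions, which range from 35% down to 8%.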

Average sales cycle length. Days from opportunity created to closed-won. The healthy band for B2B SaaS is 46 to 75 days; longer cycles eat working capital, shorter cycles often mean qualification is loose. Cycle length is a velocity input: shorter cycles, all else equal, mean more revenue per quarter.
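Computing the cycle from CRM dates is a one-liner once each closed-won deal carries its created and closed dates. A minimal Python sketch with hypothetical deals; it takes the mean, matching "average" in the definition, though a median is a reasonable alternative when a few very long deals skew the number.

```python
from datetime import date
from statistics import mean

# Hypothetical closed-won deals: (opportunity created, closed-won) dates.
won_deals = [
    (date(2024, 1, 8), date(2024, 3, 1)),    # 53 days
    (date(2024, 1, 22), date(2024, 4, 5)),   # 74 days
    (date(2024, 2, 12), date(2024, 4, 19)),  # 67 days
]


def avg_cycle_days(deals) -> float:
    """Average days from opportunity created to closed-won."""
    return mean((closed - created).days for created, closed in deals)


print(f"{avg_cycle_days(won_deals):.1f} days")  # 64.7 days
```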

Average deal size (ASP). Total closed revenue divided by deals won. The SaaS median sits around $26,000 per the latest benchmark data, but ASP varies wildly by segment and motion. ASP is context, not a band; what matters is whether it is moving the way the strategy expects (up if you are moving upmarket, stable if you are scaling SMB).
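Because ASP is read as a direction rather than a band, the useful computation is the period-over-period change. A minimal Python sketch; the quarterly figures and the function name are hypothetical, and whether a rise is good news depends on whether the strategy calls for moving upmarket.

```python
def asp(closed_revenue: float, deals_won: int) -> float:
    """Average deal size: total closed revenue divided by deals won."""
    return closed_revenue / deals_won if deals_won else 0.0


# Hypothetical quarters: (closed revenue, deals won).
quarters = {"Q1": (780_000, 30), "Q2": (896_000, 32)}
prev, curr = (asp(*quarters[q]) for q in ("Q1", "Q2"))
change = (curr - prev) / prev
print(f"ASP Q1 ${prev:,.0f} -> Q2 ${curr:,.0f} ({change:+.0%})")
# ASP Q1 $26,000 -> Q2 $28,000 (+8%)
```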

Lead response time. Time from inbound lead arrival to first sales touch. Healthy is under 5 minutes; the conversion multiplier is real and large. Published research finds that responding within 5 minutes rather than 30 raises conversion to qualified opportunity by 5x to 21x. Most teams know this and still let response time drift to hours. Build the alert into the workflow.
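"Build the alert into the workflow" can start as a simple check over lead timestamps. A minimal Python sketch, assuming each lead records an arrival time and an optional first-touch time; the lead IDs, timestamps, the `SLA` constant, and the function name are hypothetical, and the alert is only printed here rather than posted to any particular tool.

```python
from datetime import datetime, timedelta

SLA = timedelta(minutes=5)  # the "healthy" threshold from the text; the name SLA is our own

# Hypothetical inbound leads: lead id -> (arrival time, first sales touch or None).
leads = {
    "L-101": (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 9, 3)),
    "L-102": (datetime(2024, 5, 6, 9, 10), datetime(2024, 5, 6, 9, 52)),
    "L-103": (datetime(2024, 5, 6, 9, 20), None),
}


def response_breaches(leads, now, sla=SLA):
    """Return (lead id, minutes waited, state) for every lead that missed the SLA.

    Untouched leads are measured against `now`, so they alert while still waiting.
    """
    out = []
    for lead_id, (arrived, touched) in leads.items():
        end = touched if touched is not None else now
        waited = end - arrived
        if waited > sla:
            state = "touched late" if touched is not None else "still waiting"
            out.append((lead_id, waited.total_seconds() / 60, state))
    return out


for lead_id, minutes, state in response_breaches(leads, now=datetime(2024, 5, 6, 9, 30)):
    print(f"ALERT {lead_id}: {minutes:.0f} min, {state}")
```

Measuring untouched leads against the current time is the design choice that matters: a check that only looks at completed first touches cannot fire while a lead is still sitting in the queue.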

"Lead generation is what drives growth; salespeople are simply a conduit for that growth." - Aaron Ross, Predictable Revenue

Ross's framing is why pipeline coverage outranks closer skill on this list. A team with great closers and 1.5x pipeline coverage will miss number every quarter; a team with average closers and 4x coverage will hit consistently. The pipeline is the fuel; closing is what converts fuel into miles.

Benchmarks at a Glance

The table below shows healthy, watch, and fix bands for each of the six KPIs. Use it as starting calibration; B2B SaaS specifics are drawn from public benchmark data, and bands shift by segment and stage.

| KPI | What it measures | Healthy | Watch | Fix |
|---|---|---|---|---|
| Quota attainment | Percent of quota the team or rep achieves in a period | 80% or higher | 65% to 80% | Below 65% |
| Pipeline coverage ratio | Qualified pipeline value divided by quota | 3x to 4x | 2x to 3x | Below 2x |
| Win rate | Closed-won deals divided by total opportunities, segmented | 20% to 30% (B2B SaaS) | 12% to 20% | Below 12% |
| Average sales cycle length | Days from opportunity created to closed-won | 46 to 75 days (B2B SaaS) | 30 to 45 or 76 to 100 days | Above 100 days |
| Lead response time | Time from inbound lead arrival to first sales touch | Under 5 minutes | 5 to 30 minutes | Above 30 minutes |
| Sales Velocity | (Opportunities x win rate x ASP) / cycle length, in $/day | Trending up quarter-over-quarter | Flat | Trending down |
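The bands translate directly into a small lookup. A minimal Python sketch, assuming mid-market B2B SaaS as the baseline; the KPI keys, the `Band` class, and the team readings are hypothetical. Sales Velocity is left out because its band is a trend, not a threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    healthy: float           # edge of the Healthy band
    watch: float             # edge of the Watch band; past this is Fix
    higher_is_better: bool


# Edges from the benchmark table. Simplifications (assumptions): only the edge that
# separates Healthy/Watch/Fix is modeled, so the 4x coverage ceiling and the short
# side of the cycle-length band are ignored, and a value exactly on an edge counts
# toward the better band.
BANDS = {
    "quota_attainment_pct": Band(healthy=80, watch=65, higher_is_better=True),
    "pipeline_coverage_x": Band(healthy=3, watch=2, higher_is_better=True),
    "win_rate_pct": Band(healthy=20, watch=12, higher_is_better=True),
    "sales_cycle_days": Band(healthy=75, watch=100, higher_is_better=False),
    "lead_response_min": Band(healthy=5, watch=30, higher_is_better=False),
}


def classify(kpi: str, value: float) -> str:
    """Return 'Healthy', 'Watch', or 'Fix' for one KPI reading."""
    band = BANDS[kpi]
    if band.higher_is_better:
        if value >= band.healthy:
            return "Healthy"
        return "Watch" if value >= band.watch else "Fix"
    if value <= band.healthy:
        return "Healthy"
    return "Watch" if value <= band.watch else "Fix"


# Hypothetical team readings.
team = {
    "quota_attainment_pct": 72,
    "pipeline_coverage_x": 3.4,
    "win_rate_pct": 11,
    "sales_cycle_days": 88,
    "lead_response_min": 4,
}
for kpi, value in team.items():
    print(f"{kpi:<22} {value:>5}  {classify(kpi, value)}")
```

Segment-specific teams would swap in their own edges, for example a higher win-rate floor for SMB-only teams.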

Two cautions on the bands. First, win rate ranges assume mid-market B2B SaaS as the baseline; SMB-only teams should expect higher (30%+), enterprise-only teams should expect lower (8% to 15%). Second, sales cycle length is shorter for self-serve and product-led teams (often 7 to 30 days) and longer for complex enterprise (90 to 180+ days). Compare against your own historical baseline before comparing against industry averages.

Sales KPI Health Check

Type your numbers and see where each one sits against B2B SaaS benchmarks. The auto-calculated Sales Velocity is the headline number that tells you how much revenue your engine produces per day.


The widget above is the version we hand to teams that want to see how their inputs combine into Sales Velocity. The auto-calculated dollars-per-day output is the headline; the input rows tell you which lever to pull first. With the other inputs held constant, a 20% lift in pipeline raises Velocity by 20%, and cutting cycle length from 75 to 60 days raises it by 25% (75 / 60 = 1.25). The widget makes those tradeoffs visible at a glance, which is the point.
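The same tradeoffs can be checked without the widget. A minimal Python sketch of the Sales Velocity formula from the benchmark table; the baseline inputs are hypothetical, and "opportunities" stands in for pipeline volume on the assumption that win rate and ASP hold steady as volume grows.

```python
def sales_velocity(opportunities: int, win_rate: float, asp: float, cycle_days: float) -> float:
    """(Opportunities x win rate x ASP) / cycle length, in dollars per day."""
    if cycle_days <= 0:
        raise ValueError("cycle length must be positive")
    return opportunities * win_rate * asp / cycle_days


# Hypothetical baseline: 120 qualified opportunities, 25% win rate, $26,000 ASP, 75-day cycle.
base = sales_velocity(120, 0.25, 26_000, 75)
more_pipeline = sales_velocity(144, 0.25, 26_000, 75)  # 20% more opportunities
shorter_cycle = sales_velocity(120, 0.25, 26_000, 60)  # cycle cut from 75 to 60 days

print(f"baseline       ${base:,.0f}/day")                # baseline       $10,400/day
print(f"+20% pipeline  {more_pipeline / base - 1:+.0%}")  # +20% pipeline  +20%
print(f"75 -> 60 days  {shorter_cycle / base - 1:+.0%}")  # 75 -> 60 days  +25%
```

Because cycle length sits in the denominator, shortening it pays off slightly more than the same percentage change in any numerator input: a 20% cut in days yields a 25% gain.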

Vanity Metrics Sales Teams Confuse for KPIs

Three numbers show up on most sales dashboards and do not belong as headline KPIs. Calls made and emails sent are activity metrics; they tell you the team is busy, not whether deals close. Demos booked measures inbound interest, not qualified pipeline. Total leads in CRM measures how full the database is, not how productive it is. The honest replacements live one or two steps down the funnel: opportunities created, MQLs converted, win rate by source.

The full pattern (and the way to clean up a sales dashboard that has drifted into vanity) sits in our vanity metrics deep dive. The shortcut here: if a number can move 50% next quarter without revenue or pipeline being measurably better, it is vanity, not a KPI.

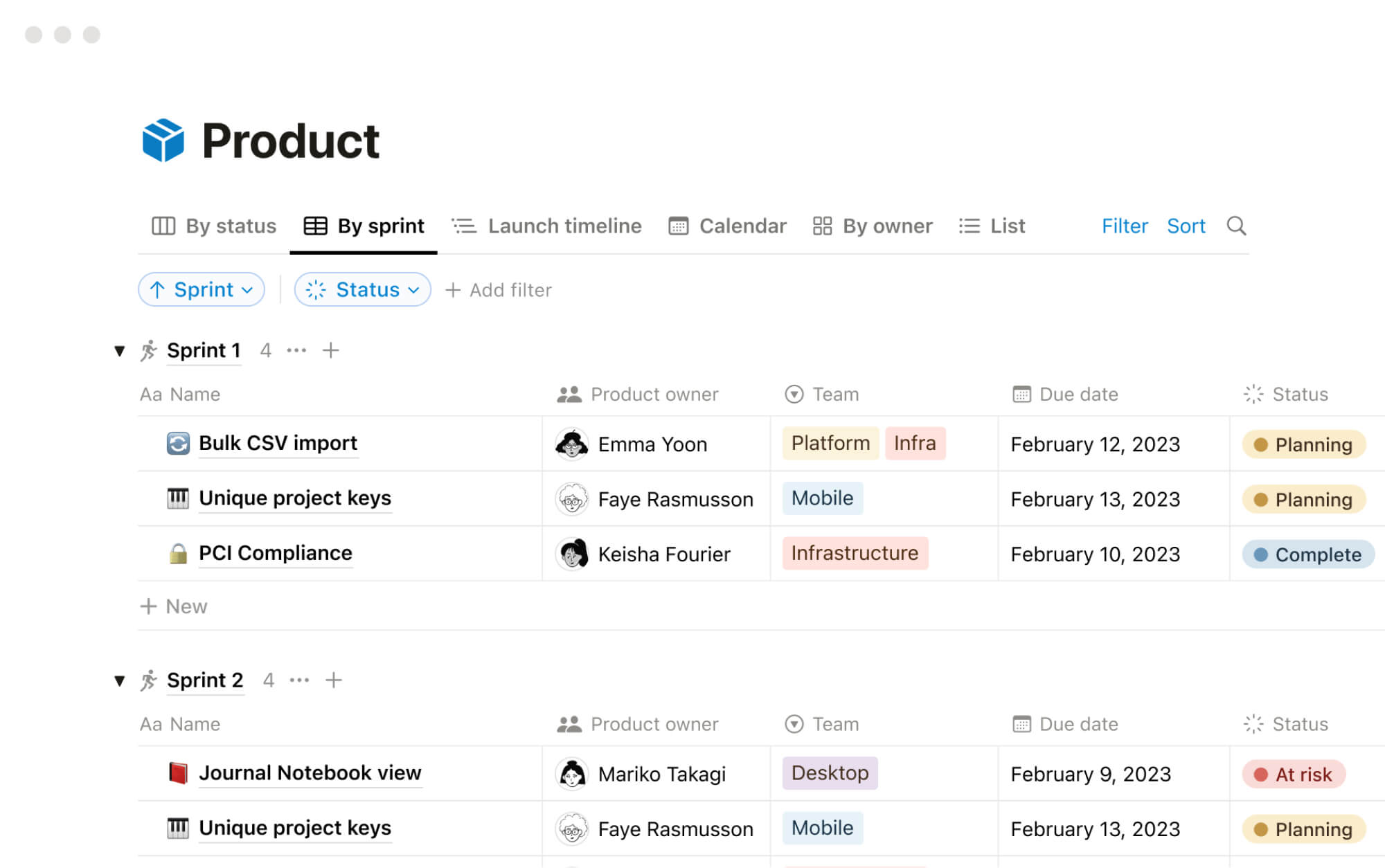

How to Set Up Your Sales Dashboard

The mechanics are straightforward; the discipline is in keeping the dashboard at six metrics. Five steps separate the sales teams that get value from KPI tracking from the ones that pile up half-watched dashboards.

- Start with Sales Velocity as the headline. Sales Velocity is the single number that compresses the engine's health into one signal. Calculate it weekly using your current pipeline volume, win rate, ASP, and cycle length. Everything else on the dashboard either feeds Velocity or explains why it moved.

- Track the four inputs that move it. Pipeline coverage tells you whether the top of the funnel is healthy. Win rate tells you whether qualification is working. ASP tells you whether the team is closing the right deals. Cycle length tells you whether the process is efficient. Move any one of those and Velocity moves.

- Add quota attainment and lead response time. Quota attainment is the board-level scorecard; track it monthly. Lead response time is the conversion-killer hidden in plain sight. The difference between responding in 5 minutes and in 30 minutes is a 5x to 21x conversion multiplier per published research; track it daily and alert when the team drifts.

- Segment the win rate. A blended company-wide win rate hides the truth. Split it by deal size (SMB / mid-market / enterprise), source (inbound / outbound / partner), and rep tenure. Each cut tells you whether to invest in better leads, better qualification, or better closing.

- Pin the dashboard inside the workflow. A KPI dashboard that lives in a CRM or BI tool gets opened twice a quarter. Pin the same six metrics inside the workspace where the team chats and ships, with a Monday review on the calendar. The closer the metrics are to the daily pipeline conversation, the more likely the team will move them.

"Winning large customers is much more about causing a sale, not just catching one." - Trish Bertuzzi, The Sales Development Playbook

Bertuzzi's distinction (causing vs catching) is why pipeline coverage and lead response time outrank passive metrics like total leads. Causing a sale means actively engineering the pipeline through targeted outbound, fast inbound response, and disciplined qualification. Catching a sale means waiting for inbound and hoping the close cycle does the rest. The six KPIs above are designed to track the causing, not the catching.

Common Mistakes

The patterns below show up across sales teams that intend to track KPIs well and quietly drift back to activity metrics or vanity. Most are political or process problems, not analytical ones.

- Tracking activity instead of outcome. Calls made, demos booked, emails sent, and meetings held are activity metrics, not KPIs. They tell you the team is busy, not whether deals are closing. Track them as inputs to coach behavior; never as the headline metric the dashboard reports up.

- Reporting a blended win rate. A 22% company-wide win rate hides the truth. SMB might close at 35%, enterprise at 8%, and the average tells nobody what to do. Always segment by deal size, source, and rep before reporting; otherwise the metric drives no decision.

- Letting pipeline coverage drift below 3x. Pipeline coverage is the leading indicator that runs ahead of quota. Below 3x, the margin for error shrinks fast; below 2x, the team is mathematically unlikely to hit number this quarter, no matter how good the closers are. Watch coverage weekly; treat any week below 2.5x as a fire drill, not a wait-and-see.

- Ignoring lead response time. Studies show that responding to inbound leads within 5 minutes rather than 30 is a 5x to 21x conversion multiplier. Most sales teams know this and still let response time drift to hours. Build the alert into the workflow; do not rely on willpower.

- Tying compensation to revenue alone. Compensation tied solely to closed revenue creates short-cycle, deal-at-any-cost behavior. Margin gets sacrificed for the close; the wrong customers come in. The cleaner pattern: compensation tied to a blend of revenue and either gross margin or LTV-adjusted contribution.

- No owner per KPI. When pipeline coverage is "the team's responsibility" and win rate is "everyone's job," nobody fixes the trend on the day it slips. Each KPI needs a single named owner whose quarter rides on it. Shared ownership is the same as no ownership.

The biggest of these is the activity-as-KPI trap. Sales managers often track calls and demos because they are easy to count and feel like they correlate with results. They correlate weakly at best; what predicts revenue is qualified pipeline coverage and win rate by segment. Coach activity at the rep level; report outcomes at the dashboard level.
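The "no owner per KPI" mistake is easy to guard against in whatever register holds the dashboard. A minimal Python sketch, assuming a simple mapping from each KPI to exactly one owner; the roles, statuses, and function name are hypothetical. It refuses to run when a KPI has no owner and produces one line per KPI outside its Healthy band.

```python
def monday_update(statuses: dict[str, str], owners: dict[str, str]) -> list[str]:
    """One line per KPI outside its Healthy band, addressed to its single owner.

    Raises ValueError if any tracked KPI lacks a named owner, so missing
    ownership surfaces before the review instead of during it.
    """
    missing = sorted(set(statuses) - set(owners))
    if missing:
        raise ValueError(f"KPIs without a named owner: {missing}")
    return [
        f"{owners[kpi]}: {kpi} is in the {status} band; one-line update due Monday"
        for kpi, status in statuses.items()
        if status != "Healthy"
    ]


# Hypothetical register: each KPI maps to one role (a single string, never a list).
owners = {
    "Pipeline coverage": "Head of SDR",
    "Win rate": "Mid-market sales manager",
    "Lead response time": "Inbound team lead",
}
statuses = {"Pipeline coverage": "Watch", "Win rate": "Healthy", "Lead response time": "Fix"}
for line in monday_update(statuses, owners):
    print(line)
```

Storing each owner as a single string rather than a list is the point of the design: the register cannot express shared ownership.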

What We Recommend

At Rock we run sales teams on a pinned KPI note inside the same workspace where pipeline reviews and call recordings live, with deals tracked in Tasks and weekly reviews happening in team chat. The six KPIs sit at the top with their bands; below that, each KPI links to the deals and tasks that move it. Owners post one-line updates on Mondays for any KPI outside its band, and quarterly recalibration retires anything the team has not acted on.

The reason to keep the dashboard inside the workspace where pipeline conversations happen is a failure mode that also hits marketing and agency teams: CRM dashboards get opened twice a quarter at the all-hands, while KPI notes pinned next to the deal-by-deal Monday review stay visible, get debated, and actually drive action.

Pair this with the broader cluster and the six KPIs become the connective tissue between effort and revenue. The marketing KPIs piece covers what feeds the top of the funnel (LTV:CAC ratio is the marketing-side composite that mirrors Sales Velocity here). The agency KPIs piece covers service-business numbers if your sales motion is consultative; billable hours sits below as the operational input layer. The KPI framework covers the discipline of what counts as a KPI; vanity metrics covers what to cut. The OKR vs KPI bridge and OKR framework cover when to drive change versus hold a standard. Above the dashboard layer, SWOT, Strategic Choice Cascade, and PESTEL set the strategic direction.

Track the six alongside the pipeline that produces them. Rock combines chat, tasks, and notes in one workspace. One flat price, unlimited users. Get started for free.